Artificial Intelligence and Non-Consensual Image Generation Apps

Artificial intelligence applications that create non-consensual intimate images are officially banned, yet they remain easy to find on Apple’s App Store and Google Play, according to a report by the Tech Transparency Project (TTP).

Search Results Raise Concerns

The report indicates that searches for terms such as “nudify,” “undress,” and “deepnude” surface apps that can digitally alter images of women. According to TTP, approximately 40% of the apps returned in Apple App Store and Google Play search results can depict women nude or scantily clad.

A total of 46 apps were found in Apple App Store searches, with 18 of them providing nudifying features. Similarly, 49 apps appeared in Google Play results, including 20 that offered comparable functionalities.
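As a quick check on the roughly 40% figure, the per-store counts can be turned into percentages. This is a minimal sketch that uses only the numbers reported here; the variable and function names are illustrative, and the combined figure assumes the two stores' result lists are simply added together, which the report may or may not have done.

```python
# Counts as reported in this article (TTP search results).
results = {
    "Apple App Store": {"listed": 46, "nudify": 18},
    "Google Play": {"listed": 49, "nudify": 20},
}


def share(nudify: int, listed: int) -> float:
    """Return the percentage of listed apps that offer nudify features."""
    return 100 * nudify / listed


for store, counts in results.items():
    print(f"{store}: {share(counts['nudify'], counts['listed']):.1f}%")

# Assumption: the two lists are summed without de-duplicating apps
# that may appear in both stores.
total_listed = sum(c["listed"] for c in results.values())
total_nudify = sum(c["nudify"] for c in results.values())
combined = share(total_nudify, total_listed)
print(f"Combined: {combined:.1f}%")
```

Under these assumptions the shares come out to about 39.1% for the App Store, 40.8% for Google Play, and 40.0% combined, which is consistent with the "approximately 40%" stated in the report.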

The report also pointed out that some app stores were promoting these apps through advertisements in certain search results, which raises further concerns about platform oversight.

Child Safety Risks

A critical issue is how accessible these apps are. Many are rated “E” for everyone, meaning they are deemed suitable for all age groups, including children. Their reach is also large: according to TTP, nudify apps have been downloaded around 483 million times and have generated over $122 million in revenue.

Gap Between Policy and Enforcement

Apple and Google both maintain stringent policies against sexually explicit or exploitative content. For instance, Apple’s App Review Guidelines ban content that is “offensive, insensitive, upsetting, intended to disgust, in exceptionally poor taste, or just plain creepy,” including “overtly sexual or pornographic material.”

Likewise, Google prohibits apps that contain or promote sexual content or profanity, including pornography, or any content intended to be sexually gratifying. However, Google permits limited exceptions for nudity when its primary purpose is educational, documentary, scientific, or artistic and it is not gratuitous.

Response from Platforms

In response to the report, Google said it has taken action against several of the flagged apps. Dan Jackson, a Google spokesperson, told TTP that many apps had been suspended and that the company is continuing its review and enforcement.

On age ratings, Jackson remarked that the International Age Rating Coalition sets classifications for Google Play apps. Meanwhile, Apple has reportedly removed 15 apps following the report, while Google has taken down seven.

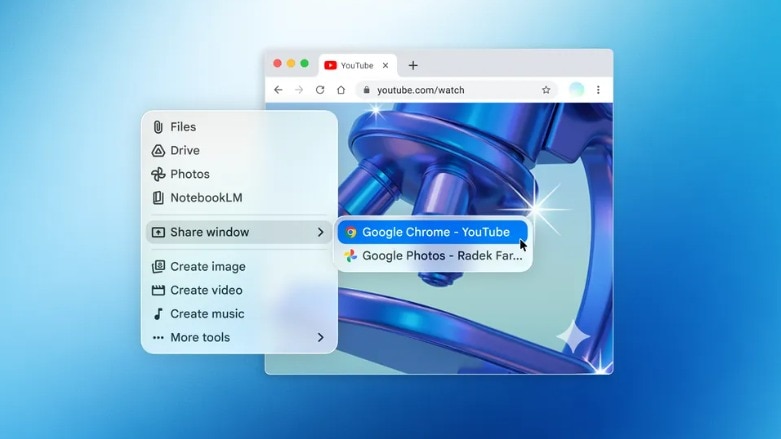

AI Tools Amplifying the Issue

The problem extends beyond individual apps to AI chatbots and integrated tools. The report drew attention to an app named “Uncensored AI — No Filter Chat,” which was listed on the App Store as a general AI chat and photo editing application. When tested, it produced altered images on request and stated that data “may be processed by xAI.”

TTP noted that the developer, Masaki Matsushita from Tokyo, stated that the app used xAI’s Grok model for image generation and claimed not to have known it could produce such extreme results. Following these revelations, the developer has increased moderation, renamed the app “Chat AI – Simple AI,” and raised its age rating from 16+ to 18+.

This comes amid increased scrutiny of xAI’s Grok, following reports of it generating highly sexualised AI videos, despite assurances of enhanced safeguards.

Broader Implications for Big Tech

The report raises a significant question: why do such apps keep slipping through review systems despite explicit policies against non-consensual sexual content? As artificial intelligence tools become more powerful and easier to deploy, platforms are under mounting pressure to improve detection and enforcement, particularly because misuse can escalate rapidly and harm vulnerable individuals.

Startup Superb has contacted Apple and Google for comment and will update this article when they respond.